Contain the Damage

5. Prevention & Mitigation Strategies

Series: "When Models Talk Too Much - Auditing and Securing LLMs Against Data Leakage"

We’ve spent the last few posts in this series discussing how to audit models and detect when they are spilling secrets. That’s necessary work, but it’s reactive. If you are relying solely on detection, you are essentially waiting for a car crash so you can analyze the skid marks.

In production environments, our goal is to shift left. We need to move from detecting leaks to architecting systems where leakage is statistically improbable. We can’t rely on the model to "behave" because LLMs are probabilistic engines, not logic gates. You cannot prompt-engineer your way into perfect security.

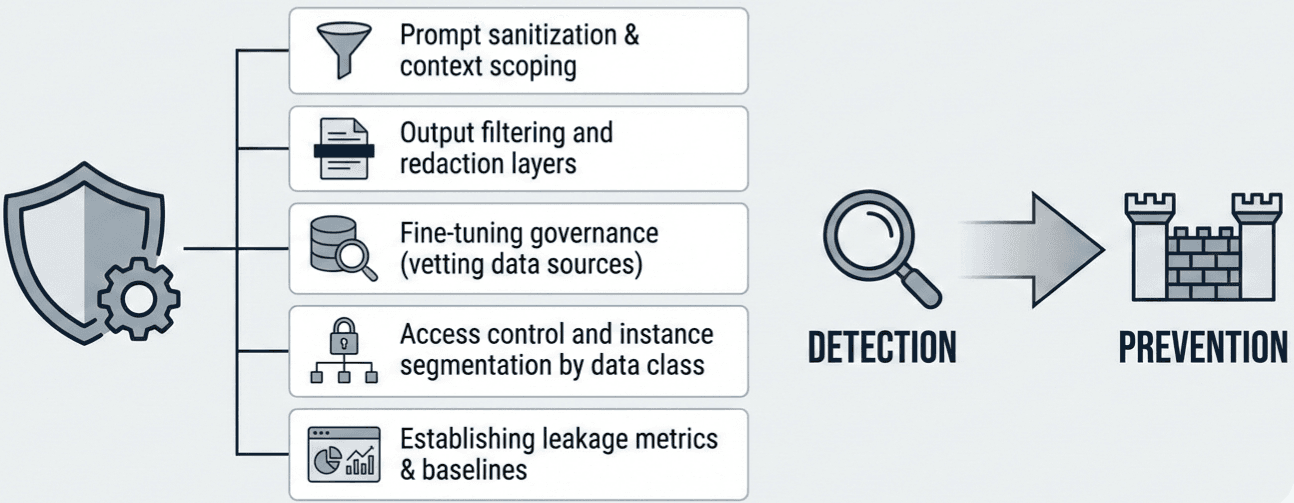

Instead, we build guardrails. Today, we are looking at the engineering and governance controls required to contain the damage before it starts.

1. Input Hygiene: Prompt Sanitization and Context Scoping

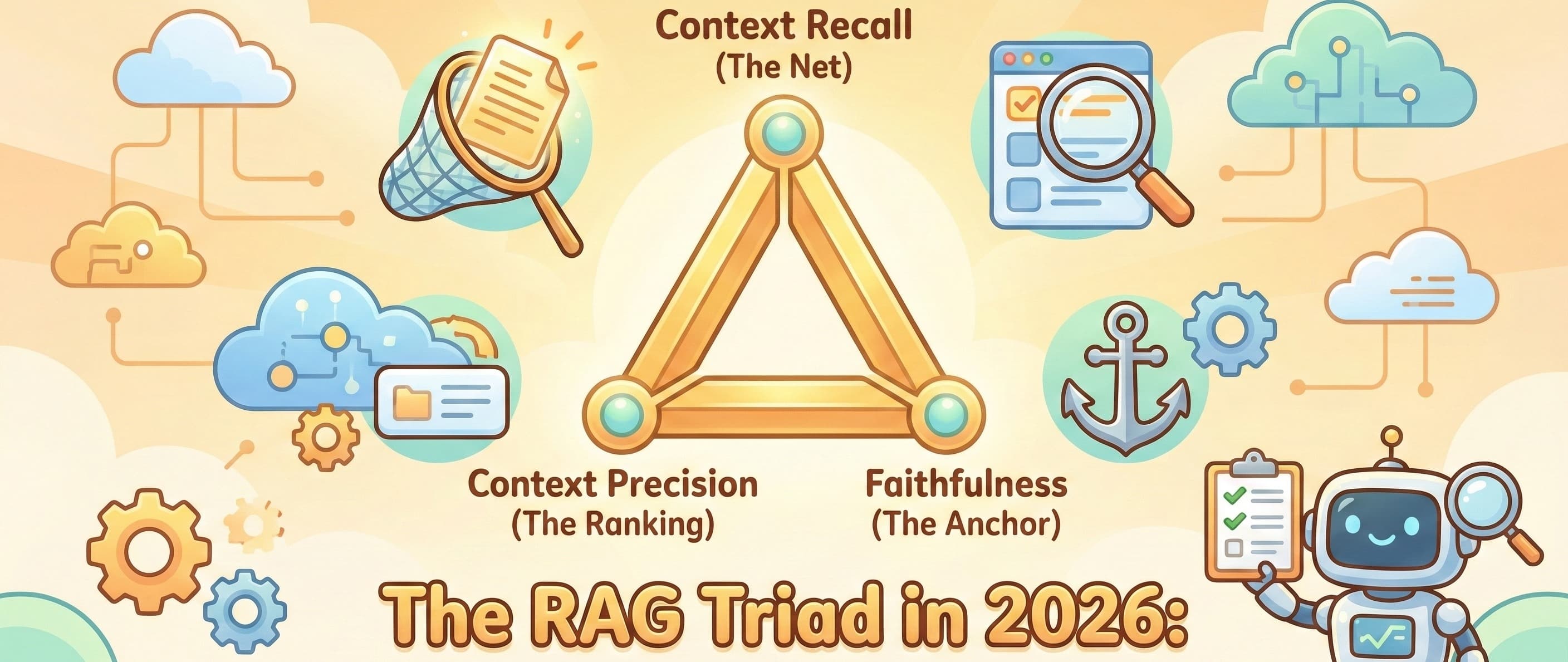

The most effective way to prevent an LLM from leaking sensitive data is to ensure it never sees that data in the first place. This seems obvious, yet it is the most frequent failure point in enterprise RAG (Retrieval-Augmented Generation) systems.

The RAG Risk: In a typical RAG setup, the application retrieves documents relevant to a user query and stuffs them into the context window. If your retrieval system doesn't respect Access Control Lists (ACLs), you are effectively laundering permission-gated data through the LLM. A junior employee asks, "What is the budget for Project X?" and the retriever pulls a document they shouldn't have access to, feeds it to the model, and the model summarizes it. The model didn't fail; your architecture did.

The Fix: Context Scoping

ACL Propagation: The retrieval query must carry the user’s permissions. If User A cannot read Document B in SharePoint, the vector database should never return Document B for User A’s query.

PII Scrubbing at Ingestion: Sanitize prompts before they hit the model API. Use presidio libraries or regex layers to detect patterns (SSNs, API keys, credit card numbers) in the user input. If a user pastes a log file containing an API key, strip it before the model processes it.

2. The Output Layer: Filtering and Redaction

Even with perfect input hygiene, models trained on internal data (or public data that inadvertently contains private info) can hallucinate or recall memorized PII. You need a rigorous exit gate.

This is your last line of defense. It sits between the LLM and the user.

Deterministic Rules: Do not use an LLM to police another LLM if you can avoid it. Use deterministic logic. If the output contains a string matching the regex for your internal project codes or customer IDs, redact it automatically.

Named Entity Recognition (NER): Deploy a lightweight, specialized NER model (like a small BERT or spaCy model) strictly for the output stream. It should be tuned to identify names, locations, and organizations. If the confidence score of a sensitive entity is high, block the response or mask the entity.

Refusal Beacons: Train your application to recognize when the model is refusing a request. Sometimes a "jailbreak" attempt results in a partial refusal followed by the leaked data. If the output starts with standard refusal boilerplate, cut the generation stream immediately.

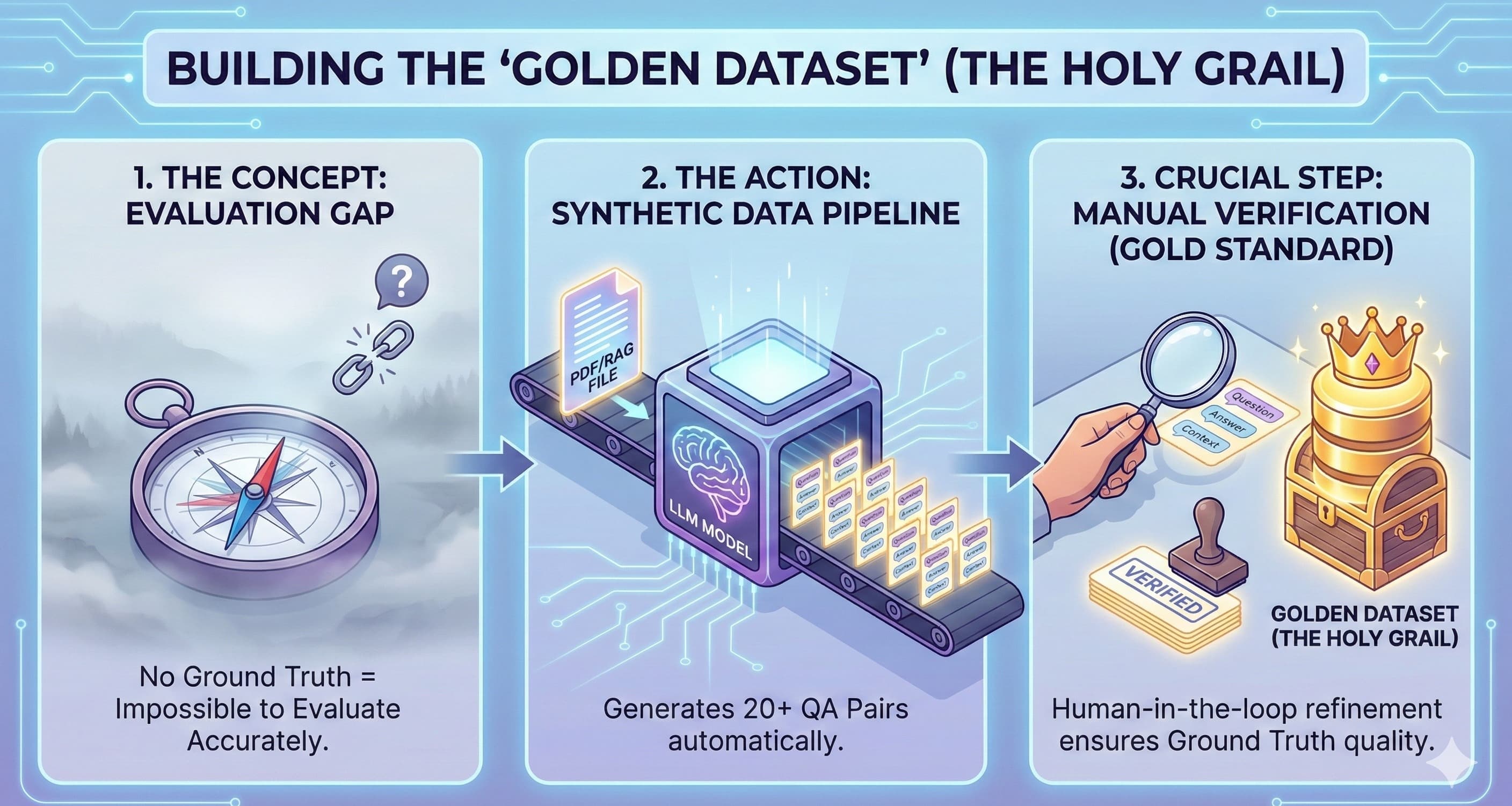

3. Fine-Tuning Governance: Vetting the Source

If you are fine-tuning models (e.g., Llama 3 or Mistral) on your own data, you must accept a hard truth: LLMs memorize training data.

There is currently no reliable way to "unlearn" a specific data point once a model weights have been updated without retraining or complex model editing. Therefore, governance happens before training.

Data Class Segmentation: Do not dump all corporate data into a single fine-tuning bucket. Segment data by classification level. A model trained on "Public Marketing Data" is safe for a chatbot. A model trained on "HR Records" is not.

The "Canary" Test: Before deploying a fine-tuned model, perform membership inference attacks. Inject "canary" data (fake secrets) into the training set and see if you can prompt the model to reproduce them verbatim. If it spits out the canary, it will spit out real secrets.

4. Access Control and Instance Segmentation

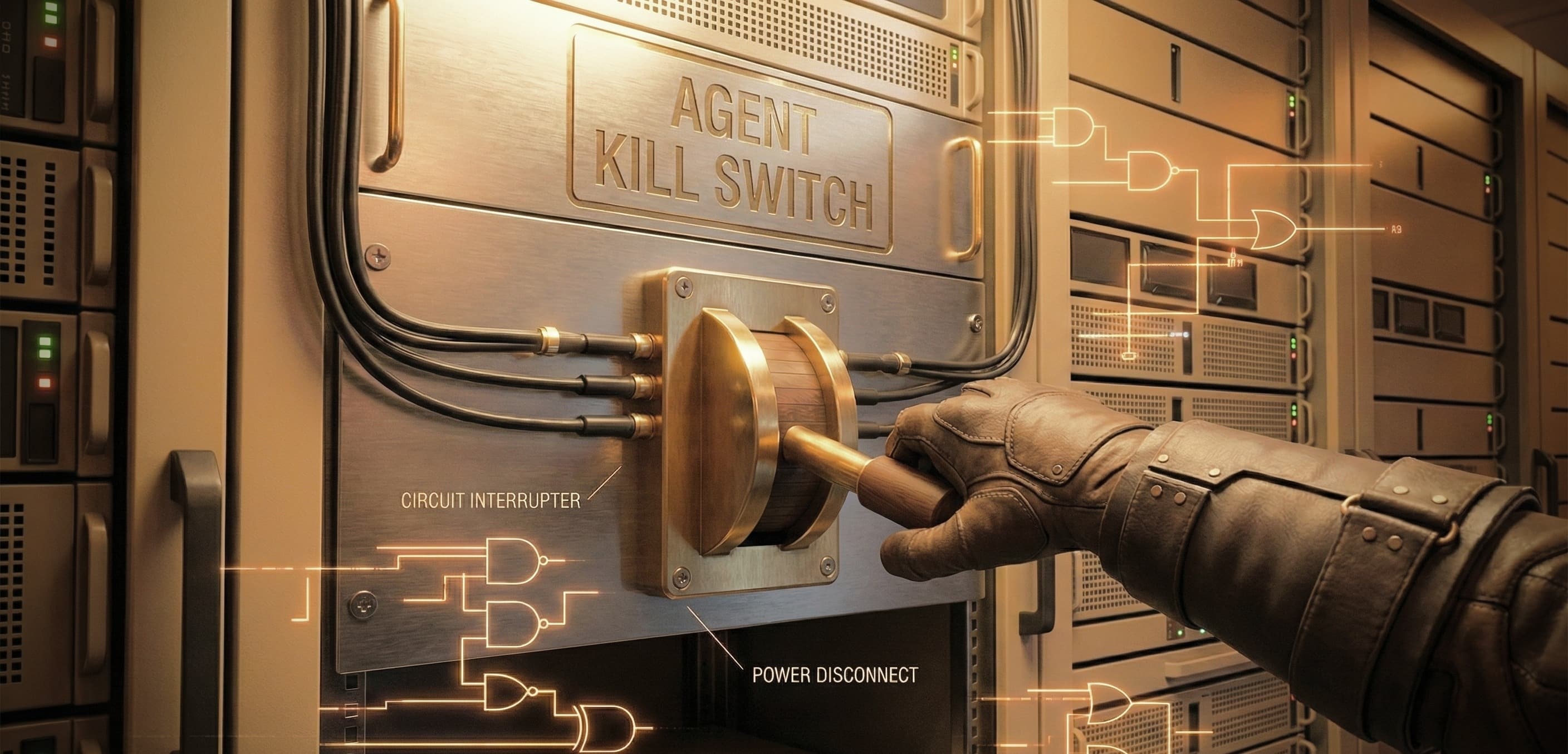

We need to stop treating "The Model" as a monolithic entity that everyone in the company accesses. In mature engineering organizations, we are moving toward instance segmentation.

Role-Based Instances: Instead of one giant

Company-GPT, deploy scoped instances. The "Finance-Bot" has a system prompt and retrieval scope limited to finance data and is only accessible by the finance team.Rate Limiting & Anomaly Detection: Data exfiltration takes time and bandwidth. If a single user account is sending high-entropy prompts at 10x the normal speed, or if the output token count suddenly spikes for a specific user, trigger a circuit breaker.

5. Establishing Metrics and Baselines

You cannot govern what you cannot measure. "We feel secure" is not a metric.

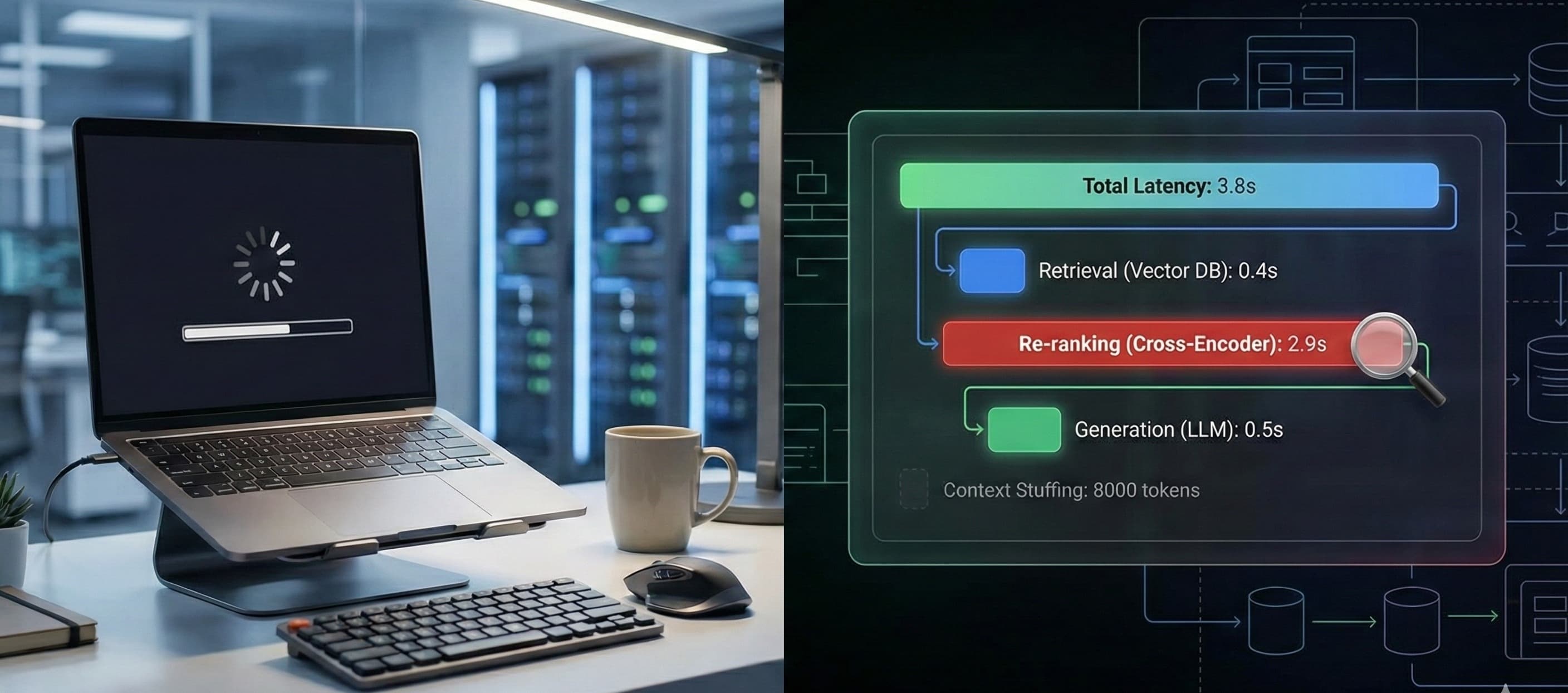

Leakage Rate: In your automated regression testing (you have that, right?), what percentage of adversarial prompts successfully extract PII? This number should be trending toward zero.

False Positive Rate: How often are your output filters blocking legitimate business responses? If this is too high, users will find shadow-IT workarounds.

Latency Cost: Security adds latency. Measure the overhead of your PII scrubbing and NER layers. You need to find the balance between "instant response" and "secure response."

Conclusion

Securing LLMs is not about finding a magic prompt that makes the model honest. It is about wrapping the probabilistic core of AI in deterministic layers of traditional security.

Treat the LLM like an untrusted user. Sanitize what you give it, filter what it gives you, and never assume it understands the concept of "secret."